Autonomous weapons

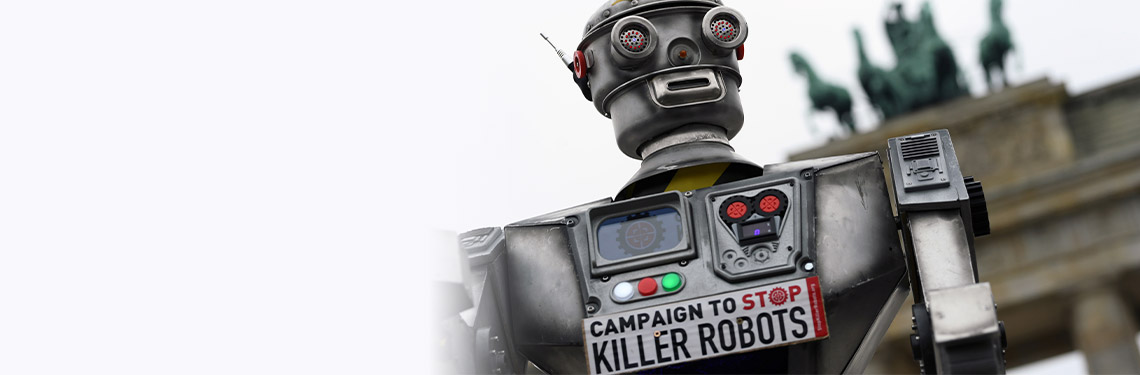

In all areas, astonishing advances in technology are presenting significant legal and regulatory challenges – none more serious than the use of military AI.

When Professor Stuart Russell – founder of the Center for Human-Compatible Artificial Intelligence at the University of California, Berkeley – gave the 2021 BBC Reith Lectures, he warned that urgent action was needed to prevent the development and deployment of military weaponry powered by artificial intelligence (AI).

While semi-autonomous weapons systems have been in development since the Second World War, Russell argued that recent technological advances mean that general-purpose AI is only a short step away. In his view, general-purpose AI is a game-changer because it represents a move away from machines that can beat humans at chess or Go, for instance, and towards intelligent systems that can problem-solve and act on their decisions through the agency of robots. No humans needed.

Arms race

Automatic killer technology already exists. Weapons such as people-hunting drones can be made cheaply and quickly by groups of grad students, according to Russell. In fact, Turkish firm STM has developed the KARGU drone, which is on sale today and is ‘designed to provide tactical ISR and precision strike capabilities for ground troops’, according to STM’s website.

Naturally, the world's military powers are rapidly developing their AI-powered warfare capabilities. Sometimes news of such projects only leaks out accidentally. For instance, four years ago 3,100 Google workers reportedly objected to the company's involvement in a US Defense Department programme, dubbed Project Maven. The goal was to refine the object recognition software used by the department's drones – although Google said its technology was used for non-offensive purposes. Sometimes developments are used as public relations exercises by the military. For example, a US Air Force project – Golden Horde Vanguard – succeeded in demonstrating how small diameter bombs could receive and interpret new instructions mid-flight. Although the project was put on the back burner, it points to the wider development of highly networked, autonomous weapons that can be controlled in battlefield situations.

Russell's concern is that once the armed forces are completely integrated, linking them to a general-purpose AI system will simply be a matter of a software upgrade.

Dr Alex Leveringhaus, Co-Director at the Centre for International Intervention at the University of Surrey, questions whether such AI-powered weaponry poses a special risk compared to other battle systems, but agrees that the threat of robotic warfare is real and dangerous. Such systems could, for example, take the war for resources into areas that are uninhabitable by humans – space, polar regions and deep oceans – creating fresh arenas for conflict and military dominance.

Conventional versus autonomous

The difference between conventional and autonomous weaponry has become an increasingly pressing issue over the past decade. Countries party to the United Nations Convention on Certain Conventional Weapons (CCW) have met regularly to discuss the issue since it was first tabled in 2013. They have agreed that international humanitarian law applies to these emerging technologies, although exactly how it applies is still far from decided.

Bonnie Docherty, a law lecturer and the associate director of armed conflict and civilian protection at Harvard Law School’s International Human Rights Clinic (IHRC), says that one crucial difference between autonomous and conventional weapons has been framed by the concept of control. To be lawful, lethal weapons must be under the control of humans – not algorithms.


She argues that autonomous weapons struggle to be acceptable under international humanitarian law on four counts: distinction, proportionality, accountability and the so-called Martens Clause. In a paper for the organisation Human Rights Watch and IHRC (with which she is affiliated), Docherty explained that since modern combatants often hide in civilian areas, machines may not be able to pick up the sometimes-subtle behavioural distinctions between soldier and non-combatant. In addition, the proportionality of an attack, especially weighing potential civilian loss of life and military gain, requires human judgement.

‘Evaluating the proportionality of an attack involves more than a quantitative calculation’, says Docherty. ‘Commanders apply human judgment, informed by legal and moral norms and personal experience, to the specific situation.’

Machines are not accountable

Securing accountability for war crimes, for instance, runs into a familiar theme of this column: in distributed systems comprising programmers, multiple hardware and software systems, and civilian and military personnel, apportioning legal accountability is anything but straightforward. Docherty suggests that holding an operator responsible for the actions of an autonomous robot could ‘be legally challenging and arguably unfair’. But deciding how far up the command chain to go before you can apportion accountability is problematic.

In fact, the issue goes deeper than that. A paper published by the Stockholm International Peace Research Institute in 2021 raises the question of whether only humans are restrained by the provisions of international humanitarian law, or whether autonomous weapons systems should also be considered agents under those provisions. CCW debates have been split on this issue regarding the broader implementation of humanitarian rules. In 2019, the UN's Group of Governmental Experts adopted Guiding Principle (d), which says: ‘Human responsibility for decisions on the use of weapons systems must be retained since accountability cannot be transferred to machines. This should be considered across the entire life cycle of the weapons system.’

But, as the report authors point out, while this appears to put the onus on the user of the weapon, it leaves unanswered the question of human agency versus machine agency. If a weapons system can be considered an agent, the basis of the current approach would need to be rethought.

Feel the fear

That may be one reason that critics such as Docherty cite the Martens Clause – Human Rights Watch has been one of the major advocates of this approach. The clause is a provision that appears in numerous international humanitarian legal documents and says that in the absence of a specific law on the topic, the rights of civilians are automatically protected by ‘the principles of humanity and dictates of public conscience’. That means that even if there is no agreement or specific law on the use of automated weaponry, the principles of protection remain in place.

Whether pleas for human agency to have the last say will be heard is open to question. But, as in all fast-moving technological areas, the law is lagging seriously behind – not least since digital and robotic technologies are blurring the definitions of concepts, such as agency, upon which laws have traditionally been built. Russell’s intervention was meant as a wake-up call to settle such matters with more urgency. ‘I think a little bit of fear is necessary’, he said, ‘because that's what makes you act now rather than acting when it's too late, which is, in fact, what we have done with the climate’.

Arthur Piper is a freelance journalist. He can be contacted at arthurpiper@mac.com

International Bar Association 2026 ©
